If you’re a developer or someone learning algorithms, you’ve probably come across the term Big-O Notation. It is a mathematical concept that helps analyze the efficiency of algorithms. This guide will explain Big-O in simple terms, using Python examples to clarify the concept. By the end of this article, you’ll understand why Big-O matters and how to use it in your coding journey.

What is Big-O Notation?

Big-O Notation describes how an algorithm's resource use scales with the size of its input. It expresses time complexity (how the number of steps grows) or space complexity (how memory use grows) as the input gets larger, rather than measuring how many seconds a particular run takes.

Why Does Big-O Notation Matter?

As your data grows, the performance of your algorithm can change dramatically. Big-O helps you predict these changes and choose the best algorithm for the job. Imagine sorting a small list of 10 numbers: it doesn’t matter much whether you use a fast or slow sorting algorithm. But when you have millions of items, the choice of algorithm can mean the difference between a fast application and a painfully slow one.
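To make the difference concrete, here is a minimal sketch that counts the steps two search strategies take on a small and a large sorted list. The helper names `linear_search_steps` and `binary_search_steps` are hypothetical and exist only for this illustration.

```python
def linear_search_steps(arr, target):
    """Count the comparisons a left-to-right scan makes before finding target."""
    steps = 0
    for value in arr:
        steps += 1
        if value == target:
            break
    return steps


def binary_search_steps(arr, target):
    """Count the loop iterations binary search makes on a sorted list."""
    steps = 0
    low, high = 0, len(arr) - 1
    while low <= high:
        steps += 1
        mid = (low + high) // 2
        if arr[mid] == target:
            break
        elif arr[mid] < target:
            low = mid + 1
        else:
            high = mid - 1
    return steps


for n in (10, 1_000_000):
    data = list(range(n))  # already sorted
    target = n - 1         # last element: worst case for the scan
    print(n, linear_search_steps(data, target), binary_search_steps(data, target))
```

With 10 items the two counts are close (10 versus 4). With 1,000,000 items the scan needs 1,000,000 comparisons, while binary search needs at most 20.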

Common Big-O Notations and Their Meanings

Here are some common types of Big-O complexities that you’ll encounter:

1. O(1) – Constant Time

- The algorithm’s performance does not change with the size of the input.

- Example: Accessing an element in an array by its index.

arr = [1, 2, 3, 4]
print(arr[2])  # O(1) operation

2. O(log n) – Logarithmic Time

- The algorithm reduces the problem size by a constant factor with each step. Binary search, for example, halves the remaining search range on every iteration.

- Example: Binary search in a sorted array.

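def binary_search(arr, target):
    # arr must already be sorted in ascending order
    low, high = 0, len(arr) - 1
    while low <= high:
        mid = (low + high) // 2
        if arr[mid] == target:
            return mid
        elif arr[mid] < target:
            low = mid + 1   # discard the left half
        else:
            high = mid - 1  # discard the right half
    return -1  # target not found

3. O(n) – Linear Time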

- The algorithm’s running time grows linearly with the input size.

- Example: Searching for an element in an unsorted list.

def linear_search(arr, target):
    for i, value in enumerate(arr):
        if value == target:
            return i
    return -1

4. O(n log n) – Linearithmic Time

- This is the typical time complexity of efficient comparison-based sorting algorithms. Merge Sort is O(n log n) in every case; Quick Sort is O(n log n) on average, but its worst case is O(n^2).

- Example: Merge Sort.

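def merge_sort(arr):
    # Sorts arr in place; lists of length 0 or 1 are already sorted
    if len(arr) > 1:
        mid = len(arr) // 2
        left_half = arr[:mid]
        right_half = arr[mid:]

        # Sort each half recursively
        merge_sort(left_half)
        merge_sort(right_half)

        # Merge the two sorted halves back into arr
        i = j = k = 0
        while i < len(left_half) and j < len(right_half):
            # <= keeps equal elements in their original order (stable sort)
            if left_half[i] <= right_half[j]:
                arr[k] = left_half[i]
                i += 1
            else:
                arr[k] = right_half[j]
                j += 1
            k += 1

        # Copy whatever remains in either half
        while i < len(left_half):
            arr[k] = left_half[i]
            i += 1
            k += 1
        while j < len(right_half):
            arr[k] = right_half[j]
            j += 1
            k += 1

5. O(n^2) – Quadratic Time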

- The running time grows with the square of the input size, so doubling the input roughly quadruples the work. This is common in algorithms with nested loops over the same input, such as Bubble Sort.

- Example: Bubble Sort.

def bubble_sort(arr):
    for i in range(len(arr)):
        # After pass i, the last i elements are already in their final place
        for j in range(0, len(arr) - i - 1):
            if arr[j] > arr[j + 1]:
                arr[j], arr[j + 1] = arr[j + 1], arr[j]

6. O(2^n) – Exponential Time

- The workload of these algorithms roughly doubles with each additional element of input, making them impractical for large inputs.

- Example: Computing Fibonacci numbers with naive recursion, where each call makes two further recursive calls.

def fib(n):
    if n <= 1:
        return n
    else:
        return fib(n - 1) + fib(n - 2)

Big-O Notation Visualized

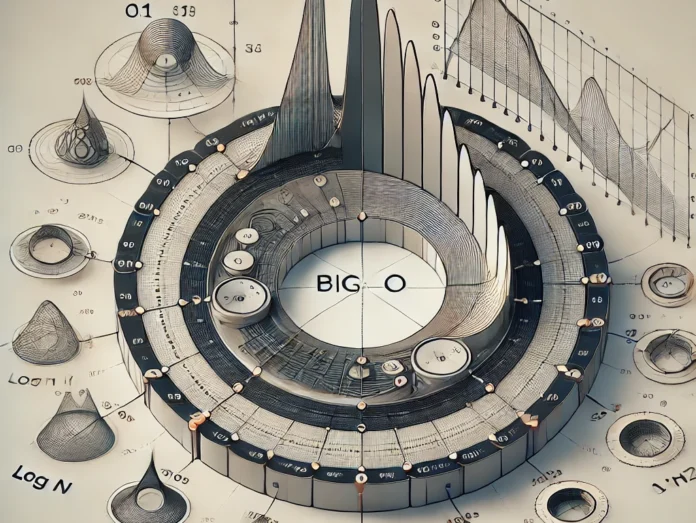

The graph shows how the cost of each complexity class grows as the input size increases. Constant time, O(1), remains flat, meaning performance stays the same regardless of input size. O(log n) grows slowly, O(n log n) grows somewhat faster than linear, and O(n^2) grows much faster still, which makes quadratic algorithms a poor fit for large inputs.
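To put rough numbers on these curves, the following sketch tabulates the value of each growth function for a few input sizes. The function name `growth_table` is hypothetical, and the values are abstract step counts, not measured running times.

```python
import math


def growth_table(sizes):
    """Return one row per n with the value of each common growth function."""
    rows = []
    for n in sizes:
        rows.append({
            "n": n,
            "O(1)": 1,
            "O(log n)": round(math.log2(n), 1),
            "O(n)": n,
            "O(n log n)": round(n * math.log2(n)),
            "O(n^2)": n ** 2,
            "O(2^n)": 2 ** n,
        })
    return rows


for row in growth_table([8, 16, 32, 64]):
    print(row)
```

At n = 64 the quadratic column is 4,096, while the exponential column is already above 1.8 × 10^19, which is why exponential algorithms stop being usable so quickly.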

How to Analyze an Algorithm Using Big-O Notation

When analyzing an algorithm, follow these steps:

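- Identify the loops

  - If there are no loops or recursive calls, the algorithm likely runs in constant time, O(1).

  - A single loop over the input suggests O(n), while a loop nested inside another loop over the same input suggests O(n^2). Check how the loop variable changes, though: a loop that halves its range on each iteration, as binary search does, is O(log n).

- Look for recursive calls

  - If the algorithm involves recursion, consider how many recursive calls each call makes and how much the input shrinks each time. Two calls that each reduce n by a constant, as in the recursive Fibonacci function, lead to exponential time; two calls on halves of the input plus a linear merge, as in Merge Sort, lead to O(n log n).

- Combine operations

  - If an algorithm consists of multiple operations, you may need to combine their complexities. For example, if you have an O(n) operation followed by an O(log n) operation, the overall complexity is O(n), because the faster-growing term dominates as n gets large.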
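The steps above can be checked by counting operations directly. This is a minimal sketch with hypothetical helper names; each function returns how many basic steps it performed for a given n, not a running time.

```python
def single_loop(n):
    """One pass over n items: n steps, so O(n)."""
    ops = 0
    for _ in range(n):
        ops += 1
    return ops


def nested_loops(n):
    """A full loop inside a full loop: n * n steps, so O(n^2)."""
    ops = 0
    for _ in range(n):
        for _ in range(n):
            ops += 1
    return ops


def halving_loop(n):
    """A single loop that halves n each time: about log2(n) steps, so O(log n)."""
    ops = 0
    while n > 1:
        n //= 2
        ops += 1
    return ops


def fib_calls(n):
    """Count the calls made by naive recursive Fibonacci: exponential growth."""
    if n <= 1:
        return 1
    return 1 + fib_calls(n - 1) + fib_calls(n - 2)


def linear_then_log(n):
    """An O(n) step followed by an O(log n) step: the n term dominates."""
    return single_loop(n) + halving_loop(n)


for n in (16, 1024):
    print(n, single_loop(n), nested_loops(n), halving_loop(n), linear_then_log(n))

print(fib_calls(10), fib_calls(20))
```

At n = 1024 the combined function performs 1,034 steps, of which only 10 come from the logarithmic part, which is why the sum is written simply as O(n). The call counter shows the recursive case: going from n = 10 to n = 20 raises the number of calls from 177 to 21,891.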

Real-World Applications of Big-O Notation

- Search Engines

  Google uses algorithms that need to handle large amounts of data efficiently. Big-O analysis helps determine which algorithms scale best as data size increases.

- Social Media

  Platforms like Facebook and Instagram optimize their algorithms using Big-O to ensure their applications can handle millions of users at once.

- E-commerce

  Amazon uses algorithms to recommend products based on user activity. These algorithms are optimized for speed and efficiency, using techniques analyzed with Big-O.

Conclusion

Understanding Big-O Notation is critical for writing efficient code. It helps you predict how an algorithm will perform as data scales, allowing you to choose the best solution for any problem. By practicing with different algorithms and analyzing their time complexities, you can significantly improve your coding skills and develop efficient, scalable applications.